At Orca AI’s event, “From Hype to Hard Decisions: AI, Risk, and Decision-Making in a More Volatile Maritime Landscape,” held during Singapore Maritime Week, industry leaders addressed how AI is being applied in real operations. The panel brought together Nils Aden (Managing Director, Harren Group), Stavros Gyftakis (CFO, Seanergy Maritime Holdings), Captain Raghav Gulati (Anglo-American), and Yarden Gross (CEO, Orca AI). The discussion focused on risk, decision-making, and how crews operate in an environment where data is less stable and systems are more complex.

Risk is shifting from external threats to cognitive pressure

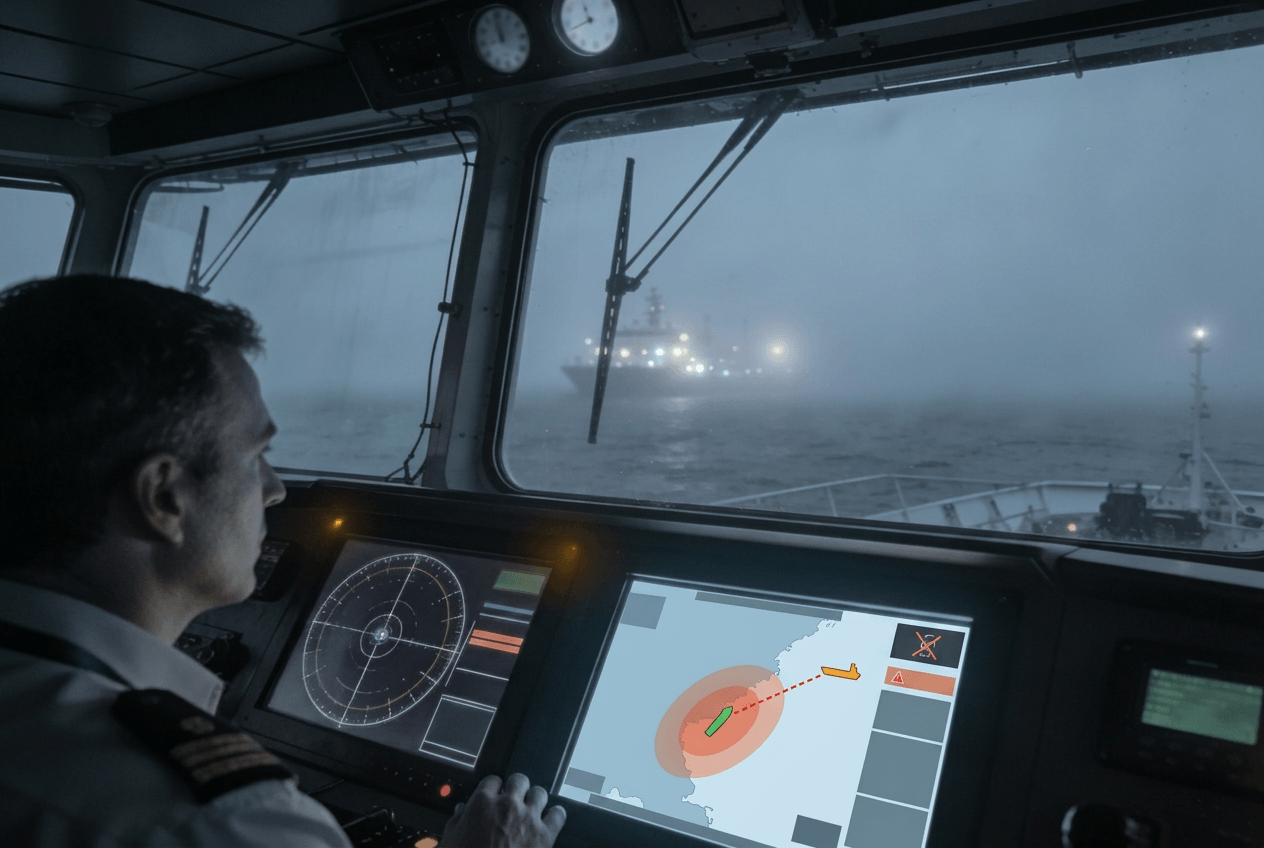

Captain Raghav Gulati of Anglo-American described a shift in how risk is created and managed. GPS spoofing and AI-driven interference are reducing confidence in navigation data. As a result, crews are dealing with increasing pressure from within their own decision-making processes.

As he explained, “The risk that gets created, it’s no more external, because we are the ones who are creating those risks; it’s more cognitive now.” Crews must process information from multiple systems that do not always agree, which increases cognitive load and decision fatigue.

AI investment fails without organizational readiness

The panel made it clear that AI adoption cannot start with financial outcomes alone.

Nils Aden, Managing Director of Harren Group, emphasized the importance of organizational readiness and sequencing. “If you start with the bottom line first, then you probably miss out the right and actually problem solving smaller steps that you need to take first before you kind of do the very big things and probably lose your organization.” The discussion highlighted that without proper alignment with crew workflows, even strong technology implementations will fail.

Stavros Gyftakis, CFO of Seanergy Maritime Holdings, reinforced the difference between efficiency gains and safety impact. While some AI tools deliver direct fuel savings, safety systems operate under different conditions. As he put it, “if something goes wrong on that [safety] side then the impact would be tremendous.” This distinction shapes how operators evaluate and prioritize AI investments across fleets.

Trust in data determines adoption

Trust in data emerged as a central challenge. The panel debated whether bad data or misplaced confidence poses a greater risk. Captain Gulati warned against blindly trusting systems that appear reliable. Yarden Gross, CEO of Orca AI, addressed how trust is built over time, noting that “eventually it’s about people… the AI is a supplement, it’s a tool, it’s coming to solve problems, but eventually it’s about the crew and about the adoption.”

Reliable performance, real-world validation, and alignment with crew expectations determine whether AI is actually used on board.

AI supports decisions; it does not replace crews

The role of AI on the bridge was clearly defined. It is not a replacement for human expertise. Captain Gulati emphasized the need to trust and empower crews: “Seafarers in my opinion are highly skilled and trained people… you have to empower them… and if we can do that intelligently we can definitely give them the ownership.” AI reduces workload and supports decision-making, but accountability and final judgment remain with the crew.

Key takeaways:

The discussion concluded with a clear direction for the industry:

- Risk is shifting from external threats to internal decision pressure, as crews manage conflicting and sometimes unreliable data.

- AI adoption depends on organizational readiness, not just financial return, and must align with how crews operate on the bridge.

- Trust remains the main barrier, requiring systems to prove reliability through real-world performance.

- AI’s role is to support decision-making, reduce cognitive load, and strengthen crew performance, not to replace the crew.